I haven’t found a good link checker since Xenu, which was last updated for Windows XP. It was mentioned in the marketing newsletter and B. (Personally I’d rather been hoping it was possible since A. So unless approves their user agent, it seems it may not be possible to use the free version of their utility on.
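Whether a server really treats requests differently depending on the user agent can be checked with a small probe. The sketch below is only a self-contained Python illustration, not anything supplied by Screaming Frog. It starts a toy local server (`UserAgentFilter`, a hypothetical name) that rejects any user agent containing an assumed token, then sends the same request under two illustrative user-agent strings. The toy server answers with a 403 as a stand-in; a genuinely refused connection would come back from `probe` as the name of the error class rather than a status code.

```python
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Assumption: the substring a server-side bot check might filter on.
BLOCKED_TOKEN = "Screaming Frog SEO Spider"

# Illustrative user-agent strings; the exact values a real crawler sends may differ.
AGENTS = {
    "spider": "Screaming Frog SEO Spider/10.0",
    "googlebot": "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
}


class UserAgentFilter(BaseHTTPRequestHandler):
    """Toy server: 403 for user agents containing BLOCKED_TOKEN, 200 otherwise."""

    def do_GET(self):
        agent = self.headers.get("User-Agent", "")
        status = 403 if BLOCKED_TOKEN in agent else 200
        self.send_response(status)
        self.send_header("Content-Length", "0")
        self.end_headers()

    def log_message(self, format, *args):
        pass  # keep the demo output quiet


def probe(url, user_agent, timeout=5.0):
    """Send one GET and return the HTTP status, or the error class name if no response arrives."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # the server answered, but with an error status
    except (urllib.error.URLError, OSError) as error:
        return type(error).__name__  # e.g. a refused connection


def run_demo():
    """Start the toy server on a free local port and probe it with each user agent."""
    server = HTTPServer(("127.0.0.1", 0), UserAgentFilter)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    url = f"http://127.0.0.1:{server.server_port}/"
    try:
        return {name: probe(url, agent) for name, agent in AGENTS.items()}
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(run_demo())
```

Calling `probe` with the same two strings against a site you administer would show whether a block is keyed on the user agent alone or on something else, such as the IP address.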

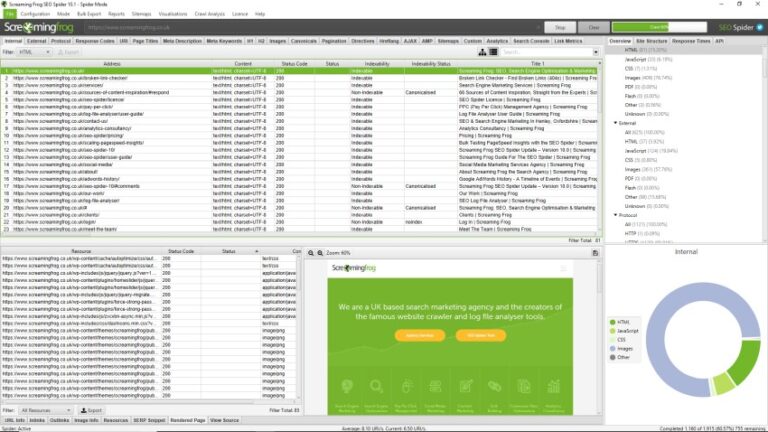

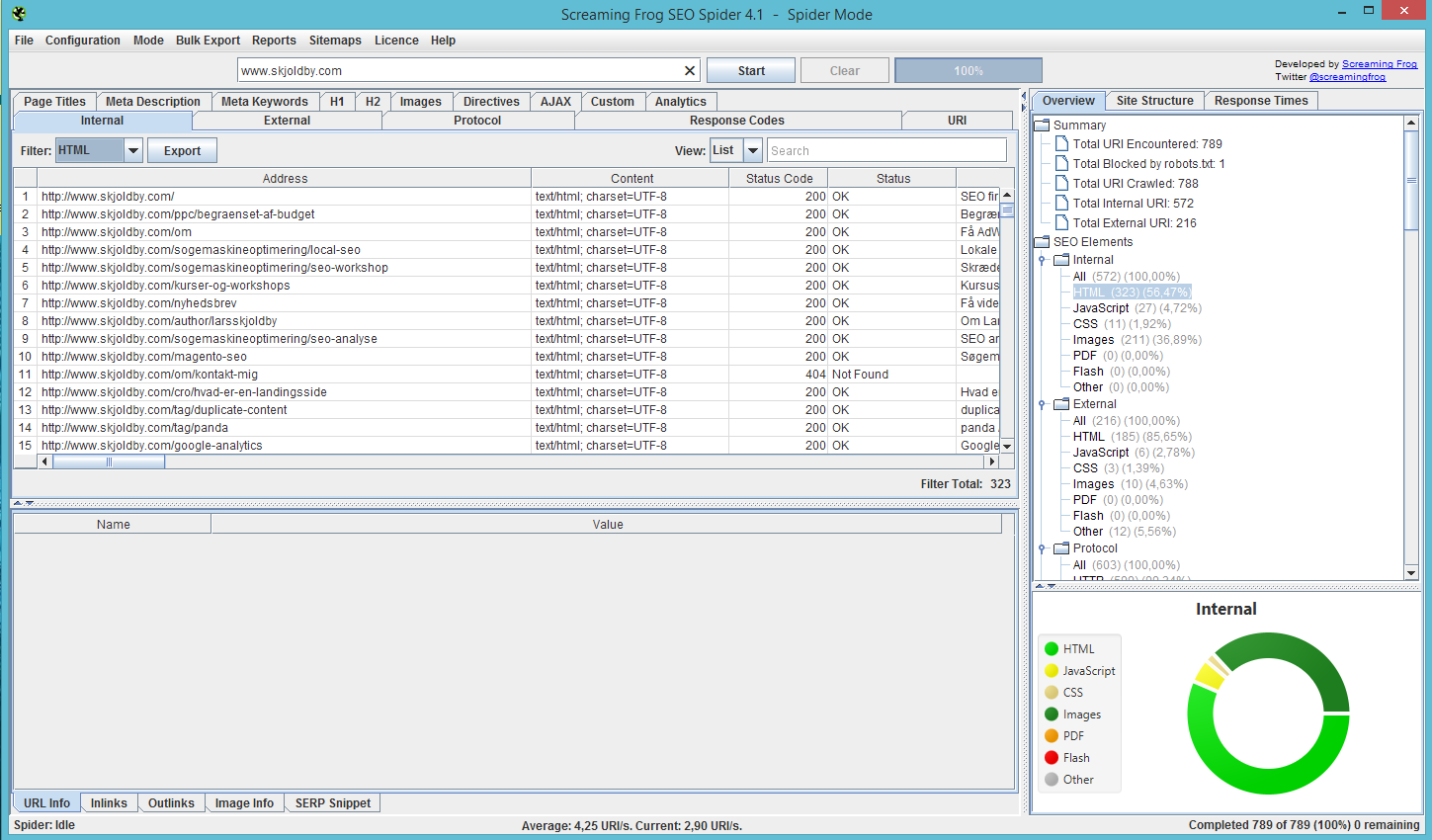

Hi folks, Screaming Frog is a utility program downloaded and run from one’s computer rather than installed on site as a plugin like Yoast, which is also recommended in that same newsletter.

I pinged Screaming Frog support and they replied that the servers respond with a connection refused to any request from the Screaming Frog SEO Spider user-agent, which appears to be some kind of ‘bot’ related check the server performs to ensure only real people visit. They added that you can still crawl the websites by changing the user-agent (Config > User-agent) and switching to Googlebot, Chrome, etc., but that this feature is only available in the licenced version of their software.

The crawl can be controlled with options that do the following:

- Start crawling the specified URLs in list mode.
- Supply a config file for the spider to use.
- Use Google Search Console API during crawl.
- Run in silent mode without a user interface.
- Create a timestamped folder in the output directory, and store the output there.
- Supply a format to be used for all exports.
- Supply a comma-separated list of tabs to export. Specify the tab name and the filter name separated by a colon.
- Supply a comma-separated list of bulk exports to perform. The export names are the same as in the Bulk Export menu in the UI.
- Supply a comma-separated list of reports to save. Names are the same as in the Report menu in the UI.
- Creates a sitemap from the completed crawl.
- Creates an images sitemap from the completed crawl.

Crawl a website via the example below. You need to add a local volume if you want to save the results to your laptop. A folder of /home/crawls/ is available in the Docker image that you can save crawl results to.

The example below starts a headless crawl of the target site and saves the crawl and a bulk export of "All Outlinks" to a local folder that is linked to the /home/crawls folder within the container.

    docker run -v /Users/mark/screamingfrog-docker/crawls:/home/crawls screamingfrog --crawl --headless --save-crawl --output-folder /home/crawls --timestamped-output --bulk-export 'All Outlinks'

The run logs output like the following:

    12:51:11,640 INFO - Persistent config file does not exist, /root/.ScreamingFrogSEOSpider/nfig
    12:51:11,836 INFO - Running: Screaming Frog SEO Spider 10.0
    12:51:11,838 INFO - Java Info: Vendor 'Oracle Corporation' URL '' Version '1.8.0_161' Home '/usr/share/screamingfrogseospider/jre'
    12:51:11,838 INFO - VM args: -Xmx2g, -XX:+UseG1GC, -XX:+UseStringDeduplication, -enableassertions, -XX:ErrorFile=/root/.ScreamingFrogSEOSpider/hs_err_pid%p.log, =/usr/share/screamingfrogseospider/jre/lib/ext
    12:51:11,839 INFO - Log File: /root/.ScreamingFrogSEOSpider/trace.txt
    12:51:11,839 INFO - Fatal Log File: /root/.ScreamingFrogSEOSpider/crash.txt
    12:51:11,841 INFO - Licence File: /root/.ScreamingFrogSEOSpider/licence.txt
    12:51:11,841 INFO - Licence Status: invalid
    13:52:14,690 INFO - Spider changing state from: SpiderWritingToDiskState to: SpiderCrawlIdleState
    13:52:14,695 INFO - Exporting All Outlinks
    13:52:14,700 INFO - Writing report All Outlinks to /home/crawls/2018.09.20.13.51.43/all_outlinks.
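For repeated crawls it can help to assemble the `docker run` invocation in a script rather than retyping it. The sketch below is one hedged way to do that in Python. The helper name `build_crawl_command` is hypothetical, `https://www.example.com` is only a placeholder target, and the flags are written with the double-dash spelling that, as far as I know, the Screaming Frog command line uses. It only builds and prints the command; it does not run Docker.

```python
import shlex


def build_crawl_command(url, host_dir, bulk_exports=(),
                        image="screamingfrog", container_dir="/home/crawls"):
    """Return the docker argument list for a headless crawl (hypothetical helper).

    host_dir is mounted at container_dir so the results land on the local disk.
    """
    command = [
        "docker", "run",
        "-v", f"{host_dir}:{container_dir}",  # link the local folder into the container
        image,
        "--crawl", url,
        "--headless",
        "--save-crawl",
        "--output-folder", container_dir,
        "--timestamped-output",
    ]
    if bulk_exports:
        # Bulk exports are passed as one comma-separated value.
        command += ["--bulk-export", ",".join(bulk_exports)]
    return command


if __name__ == "__main__":
    cmd = build_crawl_command(
        "https://www.example.com",  # placeholder URL, not from the original post
        "/Users/mark/screamingfrog-docker/crawls",
        bulk_exports=["All Outlinks"],
    )
    # shlex.join quotes arguments containing spaces, so the line can be pasted into a shell.
    print(shlex.join(cmd))
```

Keeping the command as a list means it can be handed to `subprocess.run(cmd, check=True)` unchanged, which sidesteps shell quoting for export names that contain spaces.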
